Runtime

2025

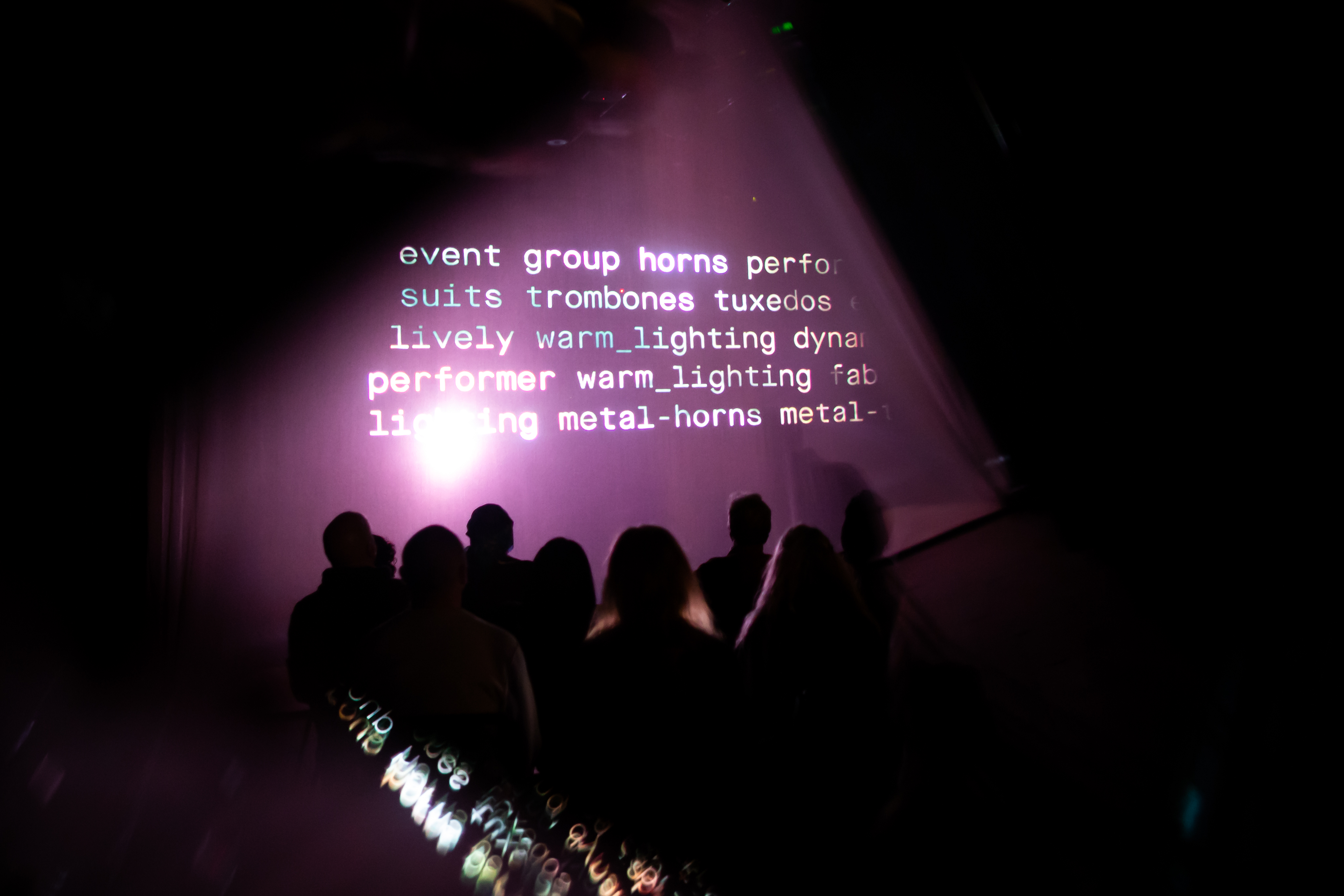

A performance in two threads.

runtime centers on memory as data and composition as sequencing logic. The piece unfolds across parallel systems: one structural, one atmospheric. Their interaction generates form in real time. runtime is not a fixed work — it is a living system in execution. Each performance is the result of an active recomposition of personal archive material, processed through generative logic and real-time sequencing.

Concept

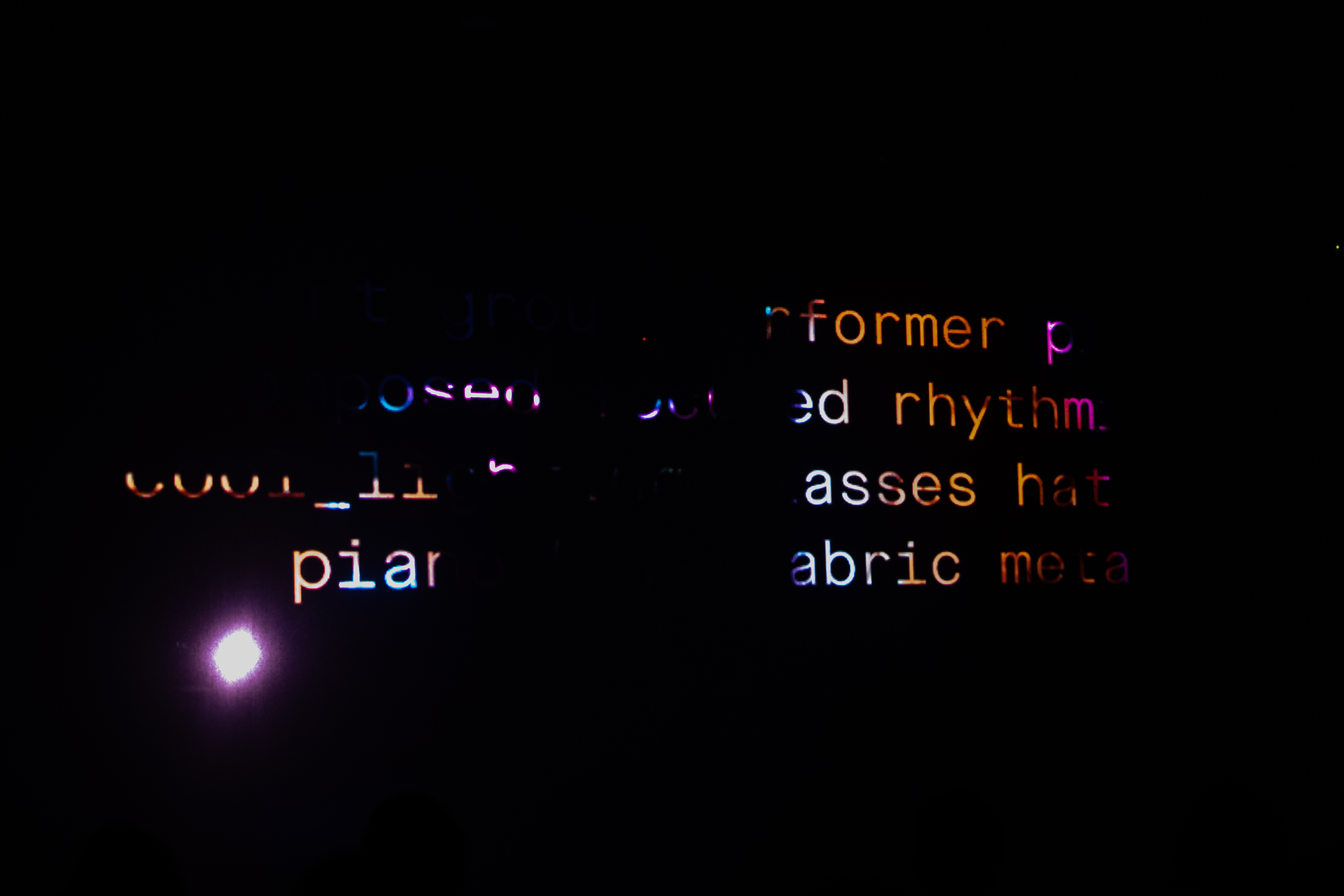

What does it mean for a performance to execute? When does memory become a callable function? runtime treats archive material, generative systems, and live composition as active processes rather than static media.

Memory in runtime behaves less like a file and more like living code: retrieved by semantic condition, not filename; recombined through procedural logic; responsive to real-time triggers.

Two operational threads drive the piece:

- Thread A, Structural: a generative sequencing engine that defines duration, movement boundaries, and phase transitions.

- Thread B, Atmospheric: a responsive layer where audio and image drift introduce variation, texture, and mood shifts.

Form emerges from the friction and coherence between these threads.
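The idea of memory "retrieved by semantic condition, not filename" can be illustrated with a small sketch. This is not the piece's actual retrieval code: the clip IDs, the three-dimensional embeddings, and the function names `cosine` and `recall` are all hypothetical, and real embeddings would have far more dimensions. It only shows the general technique of ranking fragments by cosine similarity to a query vector.

```python
import math

# Hypothetical archive: each fragment carries an ID and a tiny made-up embedding.
ARCHIVE = [
    {"clip_id": "frag_001", "embedding": [0.9, 0.1, 0.0]},
    {"clip_id": "frag_002", "embedding": [0.1, 0.9, 0.1]},
    {"clip_id": "frag_003", "embedding": [0.8, 0.2, 0.1]},
]


def cosine(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def recall(query_vec, archive, k=2):
    """Return the IDs of the k fragments whose embeddings are closest to the query."""
    ranked = sorted(
        archive,
        key=lambda frag: cosine(query_vec, frag["embedding"]),
        reverse=True,
    )
    return [frag["clip_id"] for frag in ranked[:k]]


if __name__ == "__main__":
    # The query is a direction in embedding space, not a filename.
    print(recall([1.0, 0.0, 0.0], ARCHIVE, k=2))
```

In this sketch the filename never enters the lookup; only the position of a fragment in embedding space decides whether it is recalled.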

System / Method

- Archive as living dataset — personal video archive (thousands of fragments, decades) transformed into structured data through analysis and tagging; assets annotated with machine-generated features, AI-enhanced descriptive tags, semantic embeddings for search and retrieval

- AI-assisted metadata engine — BLIP-2 and GPT-4 for visual descriptors, caption validation, rich descriptions, semantic embeddings; supports content-driven composition logic instead of manual cueing

- Sequencing logic and movement phases — five phases: Orientation, Elemental, Built, People, Blur; each with custom selection criteria, compositional rules, generative audio behavior; transitions driven by temporal progression, threshold-based triggers, real-time intervention, cross-thread conditioning

- Multi-screen and playback architecture — TouchDesigner for real-time playback and layer blending; playback instructions from CSV schedules; visual outputs collide, echo, refract across zones

- Audio as generative layer — Ableton Live with BlackHole routing and custom Max for Live patches; each movement's sonic structure interacts with video metadata for responsive audio shaping; sound as conditioned agent of atmosphere

- Technical stack: Python (data processing, AI tagging), FFmpeg (video backbone), TouchDesigner (visual engine), Ableton Live + Max for Live (generative audio), BlackHole (inter-app routing)

- Performance states: Initialization, Drift, Compression, Recall, Saturation, Dissolve — triggered by timed progression, structural milestones, metadata resonance, live performer input
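As a rough illustration of how tagged fragments might be assigned to the five movement phases and written out as a CSV schedule for playback, here is a minimal sketch. Only the phase names come from the description above. The tag vocabulary in `PHASE_TAGS`, the fixed 60-second phase length, the column names `start_s`, `phase`, and `clip_id`, and the functions `build_schedule` and `to_csv` are assumptions made for the example, not the production schema.

```python
import csv
import io

# Phase names as listed in the sequencing logic above.
PHASES = ["Orientation", "Elemental", "Built", "People", "Blur"]

# Hypothetical selection criteria: the tags each phase draws on.
PHASE_TAGS = {
    "Orientation": {"horizon", "map"},
    "Elemental": {"water", "fire"},
    "Built": {"building", "street"},
    "People": {"face", "crowd"},
    "Blur": {"motion", "abstract"},
}

# Hypothetical tagged fragments standing in for the analysed archive.
DEMO_CLIPS = [
    {"clip_id": "a", "tags": ["water"]},
    {"clip_id": "b", "tags": ["face", "water"]},
    {"clip_id": "c", "tags": ["street"]},
]


def build_schedule(clips, phase_seconds=60.0):
    """Assign clips to phases by tag overlap and spread them evenly in time."""
    rows = []
    for index, phase in enumerate(PHASES):
        phase_start = index * phase_seconds
        wanted = PHASE_TAGS[phase]
        matches = [clip for clip in clips if wanted & set(clip["tags"])]
        if not matches:
            continue  # a phase with no matching material is left empty
        slot = phase_seconds / len(matches)
        for n, clip in enumerate(matches):
            rows.append({
                "start_s": round(phase_start + n * slot, 2),
                "phase": phase,
                "clip_id": clip["clip_id"],
            })
    return rows


def to_csv(rows):
    """Serialise schedule rows as CSV text with a header line."""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer, fieldnames=["start_s", "phase", "clip_id"], lineterminator="\n"
    )
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


if __name__ == "__main__":
    print(to_csv(build_schedule(DEMO_CLIPS)))
```

A clip can appear in more than one phase when its tags match several criteria, which is one simple way a fragment could be reactivated in a new context. Threshold-based triggers and live intervention are not modelled here.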

Spatial Experience

Shifts occur without announcement. Threads overlap. Patterns surface and dissolve. The audience experiences both narrative drift and structural precision. Rather than telling a story, runtime executes a condition space — a reactive environment shaped by memory, movement, and modulation.

Documentation Notes

Archive philosophy: The archive is not storage; it's a field of potential. Each performance recalibrates that potential through new tag relationships, expressive threshold mapping, emergent schedules, context-sensitive recall. Nothing is replayed; everything is reactivated.

Setup and workflow: Configure environment (Python, FFmpeg, TouchDesigner, Ableton Live, BlackHole) → Data processing (video conversion, scene segmentation, metadata generation, embeddings) → Schedule generation (movement assignment, CSV output) → Execution (TouchDesigner rendering, Ableton Live synthesis).

Presented by Groupwork and Torn Space Theater. Supported by the Cullen Foundation and the National Endowment for the Arts.

Licensed under the Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License.